The Case Against DNP3 SAv6 and AMP

DNP3’s new security protocol reinvents what TLS already provides, and it will be implemented by vendor teams that have historically struggled to safely parse basic DNP3 messages. Use TLS. It’s proven, audited, and your developers don’t need to be cryptographers.

I sell a commercial DNP3 library. I have every commercial incentive to implement DNP3’s latest security protocol. Every layer of bespoke protocol complexity raises the barrier to entry for competitors and makes users more dependent on library vendors like me. I could implement SAv6 and charge a premium for it.

But I won’t do it. I get asked about SAv5 and SAv6 support by current and potential customers. This post is my answer, and the reasoning behind it. The short version: secure the IP path with TLS, segment internal OT networks, and handle authorization at the application layer without bespoke cryptography. The rest of this post explains why.

The DNP Technical Committee recently presented the latest revision to Secure Authentication (SAv6) along with a new Authorization Management Protocol (AMP). It represents another five to six years of work to design a bespoke cryptographic security protocol for DNP3. I think it’s time to have an honest conversation about whether this work should continue.

I’ve read the spec cover to cover, multiple times. I have deep respect for the committee members, many of whom have volunteered their time for decades to keep critical infrastructure communications working.

But dedication and domain expertise are not the same skillset as cryptographic protocol design. And the track record bears this out.

The Track Record

The committee’s first attempt at securing DNP3 was Secure Authentication version 2 (SAv2). It was followed by SAv5, published as part of IEEE 1815-2012.

In 2015, Sergey Bratus and I published a case study of DNP3 SAv5, “Bolt-On Security Extensions for Industrial Control System Protocols,” in IEEE Security & Privacy. We identified design anti-patterns that reliably lead to the kind of misimplementation that breaks security in practice: a mandatory “aggressive mode” that violated the cryptographic doom principle by forcing parsers to process untrusted data before verifying the MAC, and conflicting length encodings that let two spec-compliant parsers disagree on message boundaries, an exploitable differential in security-critical code.
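To make the doom principle concrete, here is a minimal sketch of the ordering it demands: check the MAC over the raw bytes first, and hand the payload to a parser only if the check passes. The framing (payload followed by a 32-byte HMAC-SHA-256 tag) and the function names are assumptions for illustration, not the SAv5 wire format.

```python
import hashlib
import hmac

TAG_LEN = 32  # HMAC-SHA-256 output size in bytes


def seal(key: bytes, payload: bytes) -> bytes:
    """Append an HMAC-SHA-256 tag to the payload (hypothetical framing)."""
    return payload + hmac.new(key, payload, hashlib.sha256).digest()


def open_verified(key: bytes, frame: bytes) -> bytes:
    """Return the payload only after the tag verifies; nothing is parsed before that."""
    if len(frame) < TAG_LEN:
        raise ValueError("frame too short to carry a tag")
    payload, tag = frame[:-TAG_LEN], frame[-TAG_LEN:]
    expected = hmac.new(key, payload, hashlib.sha256).digest()
    # Constant-time comparison; the payload bytes are still opaque at this point.
    if not hmac.compare_digest(tag, expected):
        raise ValueError("authentication failed; payload left unparsed")
    return payload
```

The only thing the receiver interprets before authentication is a fixed-size split of the frame. Any design that has to walk object headers or length fields to locate the MAC has already exposed its parser to unauthenticated input.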

But SAv5’s most damaging flaw was also its least subtle.

SAv5’s signature feature was per-user authentication. Each user had their own update key and session keys, with role-based access control at the outstation. It looked like genuine user-level security.

It was fundamentally broken. The master station generates all session keys and holds all update keys. A “user’s” key is really a master key with a user label on it. The master can impersonate any user at any time because it possesses all the cryptographic material needed to do so. Per-user keys provided audit labeling at enormous cost: N independent state machine instances, N key rotation cycles, and N times the pre-authentication parsing attack surface on resource-constrained outstations.

A single key per master-outstation association plus a user ID field provides identical security properties with a fraction of the complexity.
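A toy model shows why the two designs are equivalent from the outstation's point of view. It assumes an HMAC over a two-byte user label plus the message; the key values, names, and framing are hypothetical and are not taken from the SAv5 specification.

```python
import hashlib
import hmac


def tag(key: bytes, user_id: int, message: bytes) -> bytes:
    """MAC over a 2-byte user label plus the message (hypothetical framing)."""
    return hmac.new(key, user_id.to_bytes(2, "big") + message, hashlib.sha256).digest()


# Per-user model: the master holds every user's key, and so does the outstation.
PER_USER_KEYS = {1: b"\x11" * 32, 2: b"\x22" * 32}

# Single-key model: one key per association; the user ID is only a label.
ASSOCIATION_KEY = b"\x33" * 32


def accept_per_user(user_id: int, message: bytes, mac: bytes) -> bool:
    """Outstation check in the per-user model."""
    key = PER_USER_KEYS.get(user_id)
    return key is not None and hmac.compare_digest(mac, tag(key, user_id, message))


def accept_single_key(user_id: int, message: bytes, mac: bytes) -> bool:
    """Outstation check in the single-key model."""
    return hmac.compare_digest(mac, tag(ASSOCIATION_KEY, user_id, message))


def master_sends_as(user_id: int, message: bytes) -> tuple:
    """The master can label a command with any user ID in either model."""
    return (
        tag(PER_USER_KEYS[user_id], user_id, message),
        tag(ASSOCIATION_KEY, user_id, message),
    )
```

In both models a valid tag proves only that the master produced the message and attached a particular user label. The per-user key store adds state to manage without adding anything the outstation can verify.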

That conclusion follows directly from asking what possession of a key actually represents, a question fundamental to any cryptographic protocol design. Yet the committee designed, standardized, and marketed per-user authentication to utilities for seven years before anyone pointed this out. Seven years is an eternity in cryptography. And once identified, it took years more to formally deprecate it through the standards process. TLS vulnerabilities get patched across the entire internet ecosystem in days. A bespoke protocol maintained by a volunteer committee operates on a timeline of years, both to find flaws and to fix them. When something breaks in SAv6, what’s the response time? Another multi-year standards cycle?

Meanwhile, utilities evaluated multi-user SA. Procurement documents required it. Compliance checklists referenced it. All for a feature whose core security premise was broken from day one.

In 2019, TB2019-001 formally deprecated multi-user support. Adoption was limited. An EPRI interoperability demonstration in 2014 involved eleven vendors, but public evidence for broad production deployment remains thin, and the feature was deprecated before it saw meaningful field use.

Enter SAv6

SAv6, recently rebranded as “DNP3 Security Layer” (DNP3-SL) in a name change that drops the version history, makes real architectural changes. Security moves out of the application layer into a separate protocol layer between the Application and Transport layers. It uses AES-256-GCM for authenticated encryption with associated data. Challenge-response and multi-user support are gone. The Authorization Management Protocol (AMP), which is optional, handles RBAC and device enrollment separately.

On paper, SAv6 addresses the specific flaws identified in SAv5. The separate security layer should fix the cryptographic doom principle violation. AEAD eliminates the MAC algorithm zoo. Removing multi-user eliminates the complexity theater.

SAv6 is a genuinely better design than SAv5. The committee learned from the mistakes. But SAv5’s fundamental design flaws survived committee review, standardization, and publication for seven years. Nothing about the process that produced those flaws has changed: the same committee, the same IEEE process, the same absence of formal verification. Adding new cryptography to an unchanged process doesn’t fix the process.

Worse, SAv6 is not part of any published IEEE standard. IEEE 1815-2012 was inactivated in 2023, and the revision project P1815, originally authorized in 2015, remains an unpublished draft. It was extended through December 2025, then extended again through December 2027. The AMP Device specification is still under development as of early 2026. The committee told a DistribuTECH audience in February 2024 that SAv6 “will be submitted for ballot shortly.” Two years later, the draft remains unpublished. Vendors implementing SAv6 today are building against a moving target.

But publication status is not the core problem. Even if this spec shipped tomorrow, it would still be the wrong answer. The question isn’t whether the committee can produce a better spec. It’s whether the industry should be designing bespoke cryptographic protocols at all.

CISA’s February 2026 guidance, Barriers to Secure OT Communication, answers that question empirically. CISA and partners, including the DNP Users Group, conducted voice-of-customer research across critical infrastructure sectors to understand why operators aren’t adopting secure protocol variants that have existed for over two decades. Their finding:

Widespread adoption of secure communications is hindered not by a lack of technical solutions but by real-world barriers in cost, complexity, and operational risk.

CISA explicitly names DNP3 Secure Authentication as one of the secure protocol variants that remains underutilized. The barriers they document (procurement cost, deployment complexity, PKI friction, availability fears from prior bad experiences) are not problems that another bespoke protocol solves. They are problems that another bespoke protocol compounds.

The Threat Model Is Obsolete

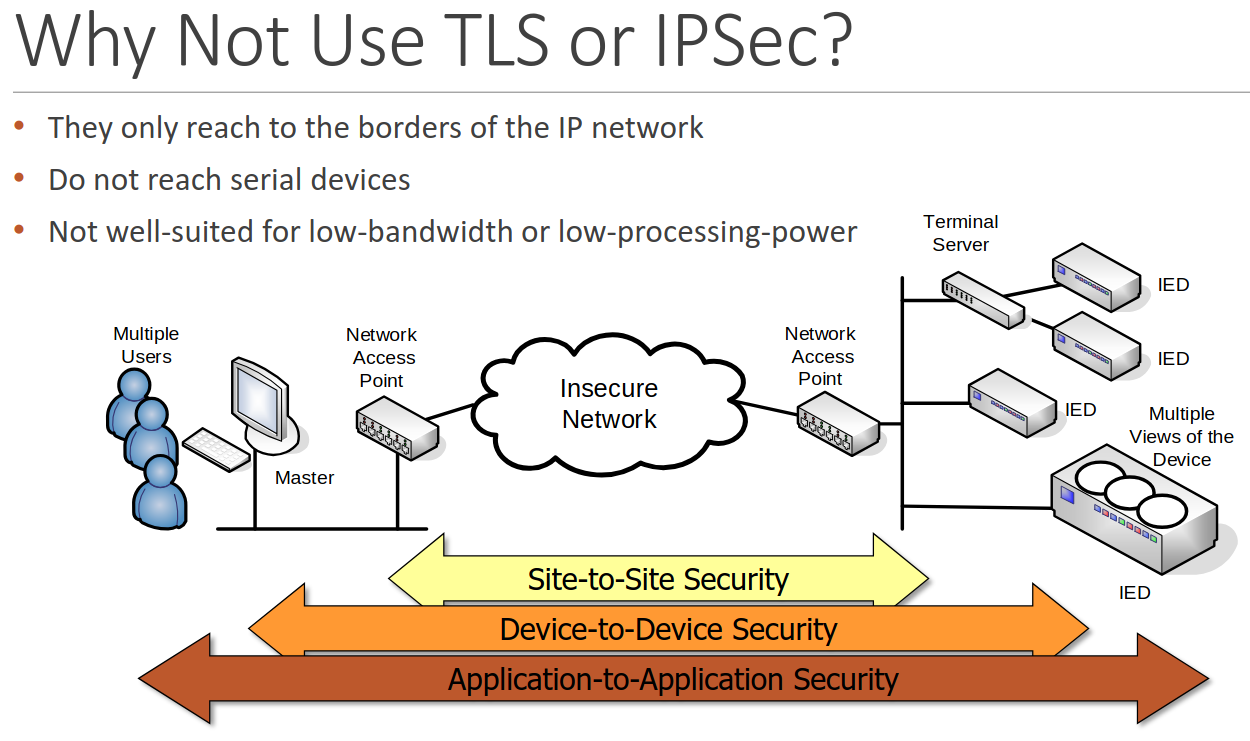

The committee’s case against TLS, as presented to the industry in 2024, rests on three claims: TLS only reaches the borders of the IP network, it doesn’t reach serial devices, and it’s “not well-suited for low-bandwidth or low-processing-power” environments.

Source: DNP Users Group, DistribuTECH 2024

In 2005, these had some validity. Serial networks were prevalent. Leased lines were the norm. Embedded processors struggled with asymmetric cryptography.

A 2021 CIGRE telecom survey of electric utilities found that legacy transport technologies, including serial channels, accounted for roughly 5% of surveyed utility telecom environments carrying SCADA-related traffic. Fifty-eight percent of respondents had begun migrating SCADA to packet-switched networks; only 9.5% reported no migration plans. Those numbers are five years old.

In 2026, the committee’s arguments have largely evaporated:

Serial networks are a shrinking edge case. Greenfield deployments are overwhelmingly IP-based. Legacy serial installations use gateways that terminate serial inside the substation and communicate over IP to the control center. The IP segment is the untrusted path that needs protection.

Commodity networks are the default. Satellite internet, cellular SCADA, and commodity IP networks are replacing private leased-line infrastructure. The untrusted network is now the norm, which is exactly the threat model TLS was designed for.

Modern embedded hardware handles TLS easily. When SA was conceived in the mid-2000s, many embedded processors couldn’t practically perform asymmetric cryptography. Symmetric-only authentication was a genuine engineering constraint, not just a design choice. In 2026, commodity embedded chipsets ship with hardware-accelerated ECC. An ECDSA P-256 verify takes ~31ms on a Cortex-M33 at 96 MHz, and even a Cortex-M0 at 48 MHz completes a TLS handshake in 2.3 seconds with a hardware secure element. The constraint that justified a symmetric-only design no longer exists, and TLS 1.3 supports lightweight cipher suites for the few devices that remain constrained, with a vastly more mature implementation ecosystem than any bespoke alternative.

The committee’s own presentation underscores the point. Slide 3 says TLS is “not well-suited for low-bandwidth or low-processing-power.” Slide 7 markets SAv6’s use of “elliptic curve algorithms to minimize processing power.” TLS 1.3 uses elliptic curve algorithms. SAv6 uses ECDH for key agreement and HKDF for key derivation in association establishment, the same primitives as a TLS 1.3 handshake. A device with the cryptographic capability to do SAv6 can do TLS. SAv6 then adds a long-lived symmetric “update key” layer on top, a design pattern from when devices couldn’t afford asymmetric operations per session. But since SAv6 already does ECDH, the constraint that justified the extra layer no longer exists. What remains is more state, more rotation lifecycle, and weaker forward secrecy than TLS 1.3’s ephemeral per-session key agreement. Mature protocol design moves toward fewer moving parts, not more.

The DER market proves the point. The DNP Users Group is marketing SAv6/AMP for the booming Distributed Energy Resources market (solar inverters, battery storage, EV chargers). But utility-facing DER communications are entirely IP-based. There are no serial links to a rooftop solar inverter. The committee is building a bespoke security stack justified by legacy serial constraints to secure a growth market that has none. IEEE 1815.2, the standardization of the MESA-DER profile for DNP3 DER communications, works with TLS today. Meanwhile, IEEE 1547-2018 specifies three allowed DER communication protocols (IEEE 2030.5, DNP3, and SunSpec Modbus), and California Rule 21 defaults to IEEE 2030.5 for investor-owned utilities, a protocol that uses TLS natively. The regulatory trajectory is toward standard TLS, not bespoke application-layer crypto.

For legacy serial links that genuinely need encryption, the problem is already solved. Bump-in-the-wire serial encryptors from multiple vendors (SEL, EngageBlack, DCB, Thales, GE/MDS) ship FIPS-validated AES encryption with explicit DNP3 and Modbus support. They retrofit onto existing serial links with zero changes to the protocol stack. Building an entire standards ecosystem around a vanishing deployment model is not justified when you can solve the problem with a box on the wire.

Substation internal security is a different problem. Protecting communications inside a physically secured substation is a network segmentation problem, not an application-layer cryptography problem. Adding cryptography to the serial link between a gateway and a relay inside a locked substation is like encrypting the bus between a CPU and its RAM. If an attacker has physical access to that link, cryptography isn’t what saves you. OT SDN provides a far stronger control: default-deny networks with strictly allow-listed communication flows, where every permitted path is explicitly engineered. A real-world deployment at a 400/110 kV substation (70 multivendor IEDs, deny-by-default network engineering) demonstrates this is not theoretical. In a static OT network, this is both feasible and more effective than application-layer authentication. It prevents unauthorized traffic from reaching a device at all, regardless of what protocol it speaks.
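The deny-by-default idea can be sketched in a few lines. This is a simplified model of a flow allow list, not the configuration language of any real SDN controller; the addresses and flow entries are made up, and only port 20000 (the standard DNP3 TCP port) reflects real-world practice.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    src: str
    dst: str
    protocol: str
    dst_port: int


# Every permitted path is engineered explicitly (hypothetical addresses).
ALLOWED_FLOWS = frozenset({
    Flow("10.0.1.10", "10.0.2.21", "tcp", 20000),  # gateway -> relay A, DNP3
    Flow("10.0.1.10", "10.0.2.22", "tcp", 20000),  # gateway -> relay B, DNP3
})


def permit(flow: Flow) -> bool:
    """Default deny: anything not explicitly listed is dropped."""
    return flow in ALLOWED_FLOWS
```

Because the set of legitimate flows in a substation is small and static, the allow list can be exhaustive. A device that is not on the list never gets a packet delivered, whatever protocol it speaks.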

The actual security architecture for modern SCADA is simple:

- Control center to substation: TLS on the IP path

- Inside the substation: Physical security and network segmentation

- Access control and audit: Identity management at the application layer, decoupled from transport security

DNP3 SA tries to solve all three at the application layer with one mechanism, because the application layer is the only layer the committee controls.

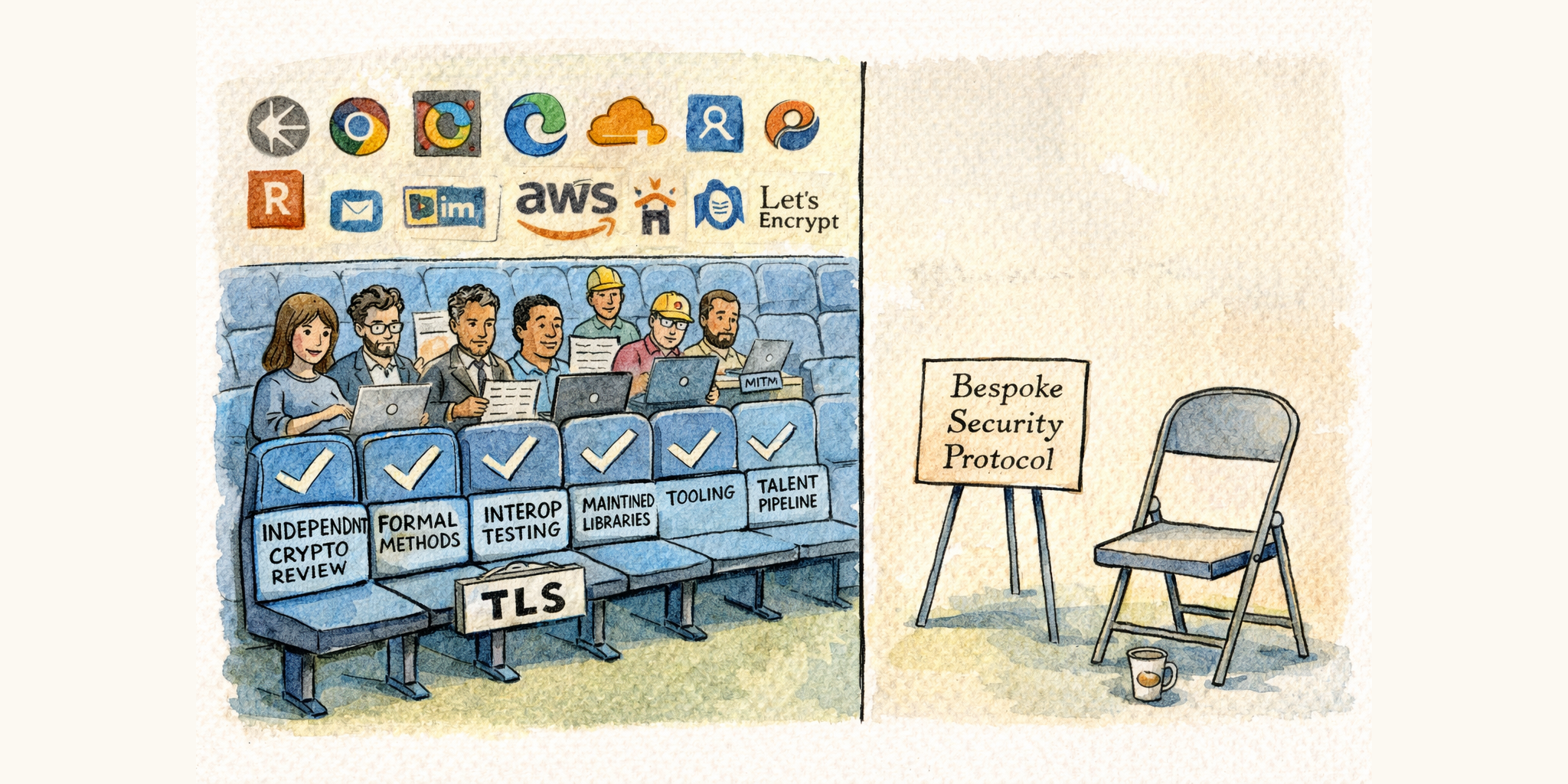

TLS Exists

TLS secures virtually every protocol on the internet. HTTP, SMTP, MQTT, gRPC, database connections, all of it. A handful of battle-hardened implementations deployed billions of times, backed by decades of formal analysis and continuously funded by every major technology company on earth.

Consider what has happened in the TLS ecosystem just since SAv5 was published in 2012, the same period the committee has spent designing its replacement:

- TLS 1.3 was published in 2018 after multiple independent formal verification efforts. It removed legacy cipher suites, simplified the handshake, and eliminated entire classes of attacks. The security bar moved up while the protocol got simpler.

- Let’s Encrypt launched in 2015 and has since issued billions of certificates, making TLS certificate management free and automated. The “certificates are too hard” objection lost most of its force.

- ACME (RFC 8555) standardized automated certificate issuance and renewal, turning what was once a manual operational burden into a background process.

- Memory-safe TLS implementations matured. rustls shipped its 1.0, was independently audited, and is now continuously fuzzed via OSS-Fuzz. Go’s crypto/tls ships with the language. These implementations eliminate entire vulnerability classes by construction.

- Embedded TLS libraries like wolfSSL and mbedTLS became production-grade for resource-constrained devices, with hardware-accelerated cipher suites on commodity chipsets.

- Certificate Transparency created public, append-only audit logs for every certificate issued, enabling real-time detection of misissued certificates.

While the committee was designing SAv6, the rest of the world was building an ecosystem that makes bespoke transport security harder to justify with each passing year.

SAv6 secures DNP3 and only DNP3. Its design, review, implementation, testing, and maintenance costs are amortized across a single protocol in a single industry. Bespoke protocol complexity is expensive at every level of the supply chain (committee design time, library vendor engineering, device manufacturer integration, utility procurement and training), and at the end of that chain you get a less scrutinized, less proven security mechanism than if everyone had just used TLS. The industry is paying more to be less secure:

| Metric | TLS 1.3 | DNP3 SAv6/DNP3-SL |
|---|---|---|
| Independent implementations | Dozens (OpenSSL, rustls, mbedTLS, wolfSSL, …) | None shipping |
| Formal verification proofs | Multiple (Tamarin, ProVerif, CryptoVerif) | None published |
| Independent security audits | Continuous, by multiple firms | None published |
| Vulnerability response time | Days to weeks | Years (standards cycle) |
| Deployment base | Billions of connections | Near zero |
| Continuous fuzzing | Yes (OSS-Fuzz, vendor programs) | None known |

When a vulnerability is found in TLS, the entire internet security apparatus responds. When a vulnerability is found in DNP3 SA, a volunteer committee convenes.

The more reasonable version of the counterargument is: use TLS for IP and SAv6 for serial. But that still means years of committee work and implementation burden across every vendor to build, certify, and maintain an entire bespoke security stack (SAv6, AMP, post-quantum extensions, a new PKI control plane) for a deployment model that accounts for roughly 5% of utility SCADA transport and is shrinking. All to secure serial links that off-the-shelf link encryptors already handle.

That addresses the split-transport case. The weaker version of the counterargument is to stack both on the same IP link. But for channel security, the properties the two layers provide (authentication, encryption, and integrity) are redundant. Redundant crypto adds attack surface, not security. The main non-redundant feature people point to is application-layer access control, and you don’t need a bespoke crypto protocol for that. Modbus Security solved this cleanly: it defines a custom X.509 extension OID that carries an authorization role string directly in the certificate. TLS handles authentication and encryption, the certificate carries the role, and the application enforces access control. No new cryptographic protocol, no new state machines, no new attack surface.
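As a rough sketch of that division of labor, assume the TLS library has already verified the peer certificate and handed the application the subject and role string it found there. The `PeerIdentity` type, the role names, and the permission table below are hypothetical; only the DNP3 function code values are standard.

```python
from dataclasses import dataclass

# Standard DNP3 application-layer function codes.
READ, WRITE, SELECT, OPERATE, DIRECT_OPERATE = 0x01, 0x02, 0x03, 0x04, 0x05

# Hypothetical role-to-permission table maintained by the outstation.
ROLE_PERMISSIONS = {
    "viewer": frozenset({READ}),
    "operator": frozenset({READ, SELECT, OPERATE, DIRECT_OPERATE}),
}


@dataclass(frozen=True)
class PeerIdentity:
    """Values taken from the already-verified peer certificate."""
    subject: str
    role: str


def is_authorized(peer: PeerIdentity, function_code: int) -> bool:
    """Deny by default: unknown roles and unlisted function codes are rejected."""
    return function_code in ROLE_PERMISSIONS.get(peer.role, frozenset())
```

The access-control logic is a table lookup. All of the cryptography stays inside the TLS library, and every allowed or denied request can be logged against the certificate subject for audit.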

What SAv6 does add is risk. Every additional layer of protocol complexity introduces new attack surface and new failure modes. In OT environments where availability takes priority over confidentiality, an untested security layer that crashes or enters an unexpected state during a handshake failure doesn’t just fail to provide security. It takes down the communication channel that operators depend on to monitor and control physical processes. If TLS is compromised, you have far bigger problems than DNP3 security. If TLS is not compromised, SAv6 is doing nothing useful. It’s just exposing a brittle, unaudited parser to potential exploitation.

Our DNP3 library uses TLS via rustls, a memory-safe TLS implementation written in Rust, independently audited, and continuously fuzzed. The embedded developer using our library never touches a cipher, never constructs an IV, never manages a key schedule. They call a well-tested API and get the full benefit of the internet’s security infrastructure.

This isn’t theoretical. PJM, the largest regional transmission organization in North America, runs its JetStream system as DNP3 SCADA over the open internet, secured entirely by TLS with PKI certificates. CAISO supports DNP3 over TLS across both its private network and the public internet. These are production deployments carrying real-time grid data across the harshest network environment there is: the public internet. No bespoke crypto required.

The usual objection is that TLS certificates are too hard to manage in substations. The friction is real, but it’s misdiagnosed. The problem is not TLS or X.509 as primitives. The problem is importing the internet’s PKI trust model (public certificate authorities, OCSP responders, 47-day rotation cycles) into an environment that doesn’t need it. Internet PKI was built for trust between unknown parties at global scale. OT is the opposite: a fixed population of known devices inside a single administrative domain. That makes the right model deny-by-default with an explicit allow list of authorized counterparties, where the allow list is the real security boundary.

In that model, the right default is a private CA issuing long-lived certificates to known devices, not public-web PKI with aggressive rotation, OCSP dependencies, and constant renewal pressure. That’s already how serious operators do it: CAISO runs a private PKI for telemetry devices with long-lived certificates and an offline root. For very small deployments, a pinned self-signed certificate is just the degenerate case of the same idea: explicit trust in a specific public key, not trust in a global hierarchy.

This is also why time-based expiry is the wrong primary revocation mechanism for OT. Short-lived certificates are a workaround for weak revocation in the internet’s allow-by-default model. OT doesn’t need that workaround. In a deny-by-default system, removing an authorized identity from the allow list is revocation: immediate, total, and independent of OCSP responders or perfectly synchronized clocks. That matters because time is fragile in OT (bad CMOS batteries, GPS clock failures, NTP/PTP spoofing), and any mechanism that kills communications because a clock drifted is a liability when availability comes first. OT prioritizes availability, then integrity, then confidentiality (AIC), not the CIA ordering of IT security. A full treatment of practical OT PKI deserves its own post.
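A minimal sketch of allow-list trust, assuming the application receives the peer's DER-encoded certificate after the handshake. For simplicity it pins the SHA-256 fingerprint of the whole certificate rather than of the public key alone; the class and method names are hypothetical.

```python
import hashlib
from typing import Dict, Optional


def fingerprint(der_cert: bytes) -> str:
    """SHA-256 fingerprint of a DER-encoded certificate."""
    return hashlib.sha256(der_cert).hexdigest()


class PeerAllowList:
    """Explicit list of authorized counterparties; everything else is denied."""

    def __init__(self) -> None:
        self._allowed: Dict[str, str] = {}

    def enroll(self, name: str, der_cert: bytes) -> None:
        self._allowed[fingerprint(der_cert)] = name

    def revoke(self, der_cert: bytes) -> None:
        # Revocation is a local delete: no OCSP responder, no clock involved.
        self._allowed.pop(fingerprint(der_cert), None)

    def lookup(self, der_cert: bytes) -> Optional[str]:
        """Return the enrolled name, or None if the peer is not authorized."""
        return self._allowed.get(fingerprint(der_cert))
```

In Python, the DER bytes for a connected peer are available from `ssl.SSLSocket.getpeercert(binary_form=True)`. Nothing in the decision depends on the current time, so a drifted clock cannot take the link down.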

Other protocol communities have already made this choice. The Modbus Organization faced the exact same decision (bespoke application-layer security vs. TLS) and chose TLS with X.509 certificates, including role-based access control embedded in certificates. IEC 62351-3 already profiles TLS for DNP3 over TCP/IP, as well as IEC 60870-5-104 and ICCP. The international standards body for power system communications has already standardized TLS for the same protocol the committee is building bespoke crypto for. The committee isn’t just competing with TLS in the abstract; it’s diverging from a parallel standards track within its own ecosystem.

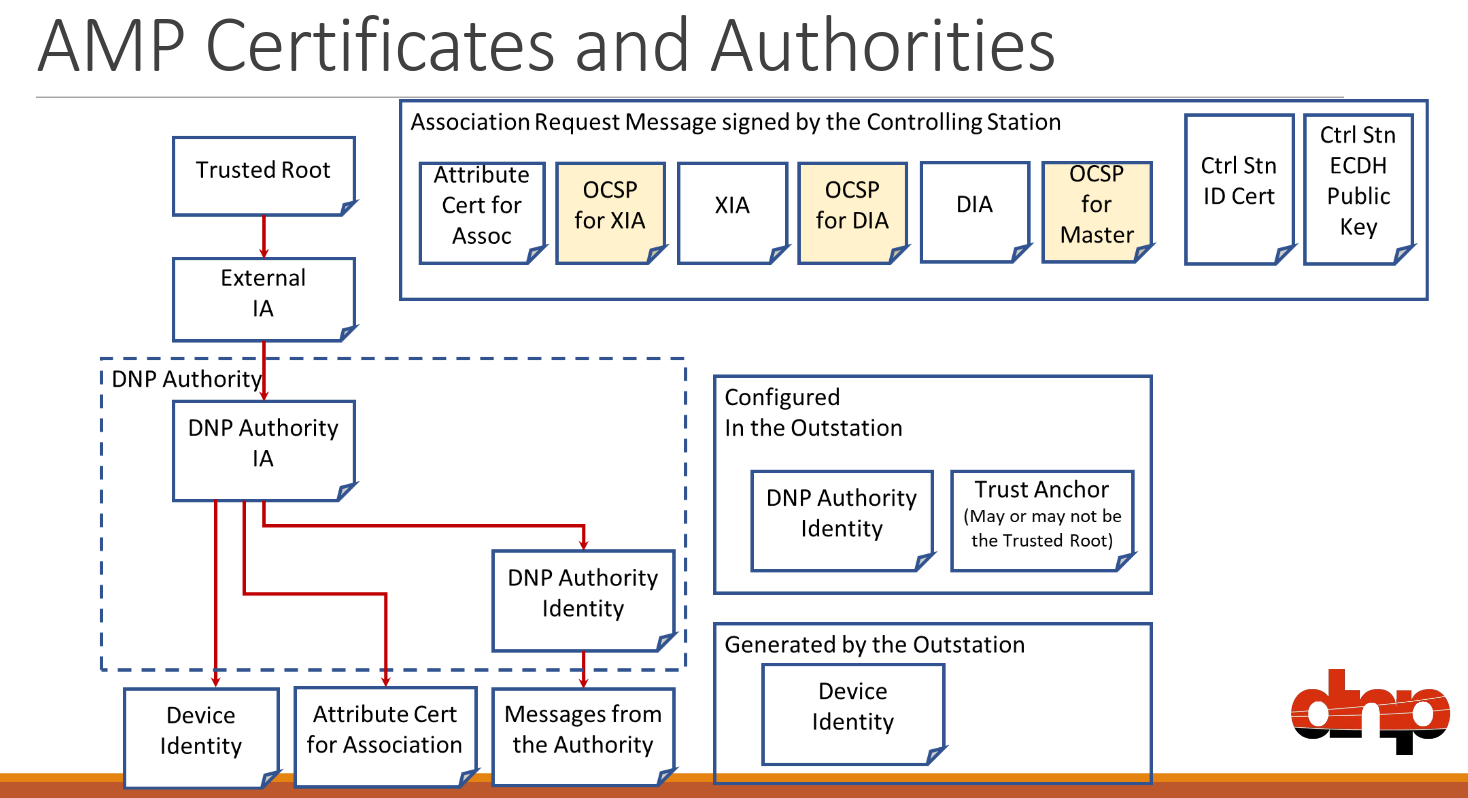

The committee’s own AMP certificate architecture illustrates the problem:

Source: DNP Users Group, DistribuTECH 2024

AMP does not eliminate PKI complexity: it reconstructs much of it inside a DNP-specific control plane. The certificates and crypto primitives are standard, but the lifecycle, status, revocation, association authorization, and session machinery are largely bespoke. The committee accepted PKI complexity anyway, then reimplemented the hard parts inside a smaller, less mature, less scrutinized ecosystem. That is worse on the axes that matter most for security engineering: review, tooling, implementation maturity, and deployment scale. AMP’s certificate lifecycle machinery is built for DNP3 and only DNP3. No other protocol community has adopted it or is likely to. Its design, implementation, and maintenance costs are amortized across a single protocol.

Building an entirely new Authorization Management Protocol from scratch is not the answer to certificate lifecycle management. The answer is recognizing that OT’s trust model is simpler than the internet’s and using TLS accordingly.

Who Reviews This?

Even if the committee has finally produced a correct specification (and that’s a real “if” given the track record), the specification is only one link in a chain. The IEEE standards process produces documents, not running code. There is no reference implementation, no feedback loop between what the committee designs and what it actually takes to build. Without that loop, there’s no pressure to simplify. Complexity accumulates by committee vote and is never checked by implementation pain. Every unnecessary feature, every optional mode, every extra state machine transition is attack surface that exists because nobody in the room had to write the code.

TLS 1.3 went through years of formal verification by professional cryptographers. Multiple independent security proofs were published. The IETF process, for all its flaws, brings deep cryptographic expertise to protocol design.

SAv6 was designed by the same committee that produced SAv5. The committee members are talented engineers who have learned some applied cryptography over the years, but none of them are cryptographers: people who design, analyze, and break cryptographic algorithms and protocols for a living. There is a real difference between understanding how to use AES-GCM and understanding how to design a protocol around it that holds up under adversarial analysis. No formal security analysis of SAv6 has been published. For a protocol intended to protect critical infrastructure, this should be a non-starter.

The issue is not merely whether formal verification exists in the abstract; it is whether it shaped the protocol before deployment. When TLS 1.3 was designed, multiple independent formal verification efforts found a serious attack in draft 10, and the protocol changed in response. The world’s best cryptographers, the people who are qualified, could not produce a correct spec without formal methods. If world-class protocol designers relied on formal methods to get TLS 1.3 right, a volunteer committee not using comparable methods should inspire much less confidence. SAv5’s first comprehensive formal analysis came after standardization and deployment, too late to change the design. A committee that had subjected SAv6 to equivalent scrutiny would trumpet the results on the first slide of every presentation. They haven’t.

The committee’s track record across three iterations suggests a persistent gap between applied crypto knowledge and cryptographic protocol design expertise. Designing a secure protocol requires deep immersion in cryptography, adversarial systems thinking, and the history of how security software fails. The committee has acquired some of this knowledge over the years, but it is not a substitute for the depth that comes from doing nothing else. This is not a personal failing. It’s a structural one.

Who Implements This?

This is the most important question, and the one the committee hasn’t yet addressed.

In 2013, Chris Sistrunk and I conducted Project Robus, a systematic fuzzing campaign against DNP3 implementations in shipping critical infrastructure devices. We found dozens of vulnerabilities, leading to a wave of CISA advisories against major vendors including SEL, Siemens, Schneider Electric, GE, and Rockwell Automation.

These weren’t exotic cryptographic attacks. They were basic parsing failures: malformed object headers crashing devices, buffer overflows in fundamental protocol handling. The implementations couldn’t correctly parse standard DNP3 messages, let alone security extensions.

In response, the committee published AN2013-004b, a 13-page application note walking implementers through how to correctly validate incoming DNP3 data, down to pseudo-code for parsing object headers and warnings about integer overflow traps. The document’s existence is itself an admission: the protocol’s parsing complexity exceeds what vendor teams can reliably handle without hand-holding.

These are the same vendor engineering teams who will now be asked to implement SAv6. The cryptographic primitives are the easy part. Vendors can call into a library for AES-GCM. The hard part is everything around it: session negotiation state machines, key lifecycle management, nonce handling, error recovery, X.509 certificate chain validation, ASN.1/DER parsing for attribute certificates, and the complex interactions between DNP3-SL and AMP. That’s where the bugs live, and that’s exactly the kind of protocol integration work that Project Robus showed these teams have not demonstrated they can do safely.

There’s a deeper architectural problem here. Most DNP3 stacks are old codebases, often written in C, never designed with adversarial input in mind. Both SAv6 and TLS protect the application layer from unauthenticated data, but they differ dramatically in where the new security code lives. SAv6 asks vendors to embed a complex new protocol layer inside these fragile codebases: session negotiation state machines, key lifecycle management, nonce handling, and error recovery, all retrofitted into stacks that were never architected for it. That’s a massive increase in attack surface within the DNP3 stack itself. Vendors who implemented SAv5 now face the prospect of supporting both versions in the same codebase, a maintenance nightmare that compounds the integration risk. TLS takes the opposite approach. All of that complexity lives in a separate, mature, heavily audited library beneath the DNP3 stack. Once the TLS handshake completes, the application sees the same interface it always did: read and write bytes on a socket. The integration cost is minimal. With SAv6, even if the code were handed to vendors for free, the effort to retrofit it into an existing stack is enormous. The trust boundary belongs around the vulnerable code, not embedded within it.
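To show how little the integration asks of the protocol stack, here is a minimal client-side sketch using Python's standard `ssl` module. It is an illustration under assumptions, not how any particular DNP3 library is built: the function names, file paths, and host are hypothetical.

```python
import socket
import ssl
from typing import Optional


def make_client_context(
    ca_file: Optional[str] = None,
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a TLS 1.3-only client context that verifies the peer."""
    # PROTOCOL_TLS_CLIENT enables certificate and hostname verification by default.
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_3
    if ca_file is not None:
        # Trust only the operator's private CA (hypothetical path).
        ctx.load_verify_locations(cafile=ca_file)
    if cert_file is not None:
        # Present a client certificate for mutual authentication.
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


def connect(host: str, port: int, ctx: ssl.SSLContext) -> ssl.SSLSocket:
    """Open a TCP connection and complete the TLS handshake."""
    raw = socket.create_connection((host, port), timeout=10)
    return ctx.wrap_socket(raw, server_hostname=host)
```

After `connect` returns, the caller uses `sendall` and `recv` exactly as it would on a plain TCP socket. The DNP3 framing and parsing code above it does not change, which is the whole point: the new security code sits beneath the legacy stack rather than inside it.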

CISA’s research found the same instinct among operators: organizations wrapped legacy OT traffic in transport-layer security specifically because they did not trust existing vendor secure protocol implementations and wanted to avoid interoperability challenges.

If you’re a utility evaluating SAv6 support from your vendor, the question isn’t “do they support it?” It’s “can their engineering team build a correct security state machine on top of crypto primitives?” The track record says no.

Our library is written in Rust, a memory-safe language, and we still won’t implement SAv6. It’s unnecessary risk when TLS already exists. Most vendors will be implementing it in C. A perfect spec implemented by teams that demonstrably cannot parse binary data without buffer overflows is just a more elegant way to get compromised.

The Institutional Problem

DNP3 was designed in 1993. The installed base is enormous and will persist for decades, but that is an argument for protecting legacy deployments cheaply and correctly with TLS, not for pouring years of effort into bespoke cryptographic protocols. Every dollar and engineering hour spent on SAv6 is a dollar and hour not spent on migrating to modern architectures, or on hardening the TLS integrations that already work.

The IEEE stamp on the cover means “a committee followed the process.” It doesn’t mean cryptographic expertise shaped the design from the start. It provides institutional credibility that can actually impede security by discouraging scrutiny.

The same small group oversees security design, test procedures, and conformance certification, which weakens independence between designing the protocol and validating it. The core designers of SAv5 are designing its replacement, but the process that missed SAv5’s flaws hasn’t structurally changed. The scope keeps expanding (post-quantum cryptography is being added before the base spec ships, AMP is growing to include routing tables and multiple transport mappings) while the committee meets roughly six times a month and has been at it since at least 2017. And we’ve seen this movie before: multi-user SA was designed, standardized, put in procurement documents, and then deprecated because almost nobody implemented it. What evidence is there that the much more complex DNP3-SL/AMP will see broader adoption?

Even if the expertise gap were closed, the governance speed problem remains. A multi-year standards revision cycle is fundamentally incompatible with security protocol maintenance. TLS vulnerabilities get patched in days. A bespoke protocol maintained through IEEE balloting operates on a timeline of years. This isn’t fixable within the model.

The Paywall Problem

DNP3 is marketed as an “open” protocol, but the IEEE 1815 specification is paywalled, and the SAv6/AMP drafts are restricted to DNP Users Group members. For a data protocol, this is a reasonable business model.

For a cryptographic security protocol in 2026, it is disqualifying.

Cryptographic protocols survive by being exposed to adversarial review from anyone with the skills to break them. Paywalls filter out exactly the people you need: independent researchers, graduate students, curious engineers, the people who find the bugs that committees miss. IEEE has learned this lesson before, at enormous cost.

Paywalled specs breed latent vulnerabilities. IEEE 802.11’s WEP encryption (1997) used 24-bit initialization vectors and misapplied RC4 in ways that any competent cryptographer would have flagged on review. The Fluhrer-Mantin-Shamir attack broke it wide open in 2001. Its replacement, WPA2 (IEEE 802.11i, 2004), contained a nonce reuse vulnerability in the 4-way handshake that sat undiscovered for 13 years until Mathy Vanhoef published the KRACK attack in 2017. As Matthew Green noted, the IEEE spec was paywalled, contained no formal description of the handshake state machine, and the IEEE GET program only made specs freely available after six months, “coincidentally, about the same time it takes for vendors to bake them irrevocably into their hardware and software.” Meanwhile, IETF standards like TLS are freely available from day one, enabling the open scrutiny that catches these flaws before they ship in billions of devices.
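A quick birthday-bound calculation shows why a 24-bit IV space is too small. The sketch assumes IVs are drawn uniformly at random, which is a simplification: real implementations often used counters, which repeat on wraparound or reset instead.

```python
def frames_until_collision(iv_bits: int, target: float = 0.5) -> int:
    """Smallest number of frames at which P(some IV repeats) reaches target."""
    space = 2 ** iv_bits
    p_unique = 1.0  # probability that all IVs seen so far are distinct
    frames = 0
    while 1.0 - p_unique < target:
        # The next frame must avoid the `frames` IVs already used.
        p_unique *= (space - frames) / space
        frames += 1
    return frames


if __name__ == "__main__":
    print(frames_until_collision(24))
```

For 24 bits the answer comes out a little under 5,000 frames, which a busy network sends in seconds. This is separate from the RC4 key-scheduling weakness that the Fluhrer-Mantin-Shamir attack exploited, but it is the kind of back-of-the-envelope check that open review tends to surface early.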

The pattern is consistent: paywalled IEEE cryptographic protocols harbor latent vulnerabilities for years because the access model prevents the breadth of review that security demands. DNP3 SAv6 is following the same model. In 2026, this is not acceptable. Every TLS RFC is free. Every formal verification proof is published. Every audit report for rustls is public. That is what openness looks like for a security protocol. The entire closed-committee, paywalled-spec model must be rejected when the output is cryptography.

What I’d Recommend

For anyone evaluating DNP3 security in 2026:

Use TLS. It’s proven, it’s audited, and battle-tested implementations are available as libraries. Your developers don’t need to be cryptographers.

Invest in network segmentation. Inside the substation, OT software-defined networking (OT-SDN) provides stronger security guarantees than any application-layer authentication scheme.

Plan your migration path. DNP3 will be around for a long time, but the trajectory is clear. New deployments should evaluate modern alternatives. Protecting legacy DNP3 with TLS buys you time without technical debt.

Be skeptical of bespoke crypto. If a security protocol is used by only one application domain and was designed by domain experts rather than cryptographers, treat it with extreme caution, regardless of what standards body published it.

Ask your vendors hard questions. Not “do you support SAv6?” but “what cryptographic library does your implementation use? Has it been independently audited? Has it been fuzzed? Can I see the results?”
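To make the “use TLS” recommendation concrete, here is a minimal Python sketch of how little security-specific code a DNP3 master needs when it delegates to a standard TLS library. It uses only Python’s standard `ssl` and `socket` modules. The function names, parameters, and the choice of TLS 1.2 as a floor are my assumptions for illustration; this is not taken from any DNP3 library, and a real deployment would follow its own certificate policy.

```python
import socket
import ssl
from typing import Optional


def build_master_tls_context(
    ca_file: Optional[str] = None,
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a client-side TLS context for a DNP3 master (hypothetical helper)."""
    # create_default_context turns on certificate and hostname verification.
    # With ca_file=None it falls back to the system trust store; an OT
    # deployment would normally pass its own private CA bundle instead.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    # Assumed policy for this sketch: refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert_file is not None:
        # Present a client certificate so the outstation can authenticate
        # the master as well (mutual TLS).
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    return ctx


def open_secure_channel(host: str, port: int, ctx: ssl.SSLContext) -> ssl.SSLSocket:
    """Connect over TCP and finish the TLS handshake before any DNP3 bytes flow."""
    raw = socket.create_connection((host, port), timeout=10.0)
    try:
        # The handshake runs here; a bad certificate raises ssl.SSLError.
        return ctx.wrap_socket(raw, server_hostname=host)
    except Exception:
        raw.close()
        raise
```

The DNP3 stack then reads and writes ordinary link-layer frames on the returned socket, unchanged. Key exchange, authentication, and record protection all live in the TLS library, which is the point: none of it is written or maintained by the protocol vendor.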

DNP3 is one of the rare industrial protocols that genuinely succeeded. It became real infrastructure that has run reliably for three decades. Nobody thinks less of HTTP because it delegates security to TLS. That’s what mature protocols do. The Users Group’s highest-value role is stewardship of that achievement.

Sources

- Crain & Bratus, “Bolt-On Security Extensions for Industrial Control System Protocols: A Case Study of DNP3 SAv5,” IEEE Security & Privacy, 2015

- Project Robus — Step Function I/O

- AN2013-004b: Validation of Incoming DNP3 Data — DNP Users Group

- TB2019-001: Deprecation of multi-user Secure Authentication

- IEEE SA — P1815 project page (Active PAR, unpublished draft)

- IEEE SA — IEEE 1815-2012 status (Inactive-Reserved)

- NesCom recommendation extending P1815 through Dec 2027, Oct 2025

- DNP-UG January 2026 Membership Update

- DNP-UG Overview of DNP3 Security Version 6

- DNP-UG AMP/DNP3-SL flyer, 2026

- SAv5 vs TLS: Different Trust Boundaries — Step Function I/O

- Modbus Security — Modbus Organization

- IEEE 1815.2-2025 — DER Communications Profile (MESA-DER)

- MESA-DER — MESA Standards Alliance

- MESA-DER Cybersecurity Workshop, June 2023

- California Rule 21 / CSIP — SunSpec Alliance

- KRACK Attacks — Mathy Vanhoef

- Falling through the KRACKs — Matthew Green

- CIGRE, Survey on the Current State of Telecommunications in Power Utilities, 2021

- EPRI, DNP3 Secure Authentication Demonstration Report, 2014

- IEC 61850 Cybersecurity Overview (IEC 62351 profiles)

- Oryx Embedded STM32U3 Crypto Benchmark

- wolfSSL Benchmarks

- CAISO Certificate Policies

- CAISO RIG Certificate Install and Renewal

- Formal Analysis of DNP3 SAv5 — Cremers, Dehnel-Wild, Milner

- TLS 1.3 Formal Verification — Tamarin Prover

- SEL / ELES OT-SDN Case Study

- SEL-3025 Serial Shield

- EngageBlack BlackSER AES Serial Encryptor

- DNP Users Group, DistribuTECH 2024 Presentation — Gilchrist

- CISA, “Barriers to Secure OT Communication: Why Johnny Can’t Authenticate,” February 2026